The digital landscape of 2026 is defined by a paradox. While we have reached a point where autonomous agents can process billions of data points in seconds, the value of the human mind has actually skyrocketed. We often hear that machines are the future of information, but when it comes to “Deep Research”—the kind that requires connecting disparate ideas, understanding cultural nuances, and verifying truth—the human touch remains the gold standard. Machines are excellent at retrieval, but they often stumble at synthesis.

The core of the issue lies in cognitive nuance. An algorithm can provide a list of facts about a legal precedent or a historical event, but it lacks the “lived experience” to understand why those facts matter in a modern context. If you are a student or a professional looking to buy an expository essay from myassignmenthelp, you quickly realize that while a machine can generate a draft, only a human expert can weave in the critical analysis and authentic voice required for high-stakes academic success. This distinction is what separates a generic summary from a piece of work that actually moves the needle in a specific field of study.

The Problem with “Data Without Context”

Artificial Intelligence operates on patterns, not principles. It looks at what has been written before and predicts what should come next based on probability. This leads to a phenomenon known as “hallucination,” where a machine confidently presents a false fact because it looks plausible in the surrounding sentence. In deep research, a single false data point can ruin an entire thesis or business proposal.
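To make the idea of predicting "what should come next" concrete, here is a minimal sketch of a word-pair (bigram) counter in Python. It is a toy illustration only, far simpler than any real AI system, and the corpus, the function names `train_bigrams` and `predict_next`, and the dates are all invented for the example. It shows how a purely frequency-based predictor will confidently repeat whichever claim appears most often, whether or not that claim is true.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for each word, which words follow it in the corpus."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word` and its estimated probability."""
    counts = follows.get(word.lower())
    if not counts:
        return None, 0.0
    best, n = counts.most_common(1)[0]
    return best, n / sum(counts.values())

# Invented toy corpus: two sources repeat one date, one source gives another.
# The predictor has no way of knowing which date is actually correct.
corpus = [
    "the bridge opened in 1901",
    "the bridge opened in 1901",
    "the bridge opened in 1910",
]
model = train_bigrams(corpus)
word, prob = predict_next(model, "in")
print(word, round(prob, 2))  # 1901 0.67
```

The predictor picks "1901" simply because it appears twice. If the single dissenting source happened to be the accurate one, the output would still look equally confident, which is the gap a skeptical human reader is meant to close.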

Humans, however, apply a layer of skepticism. We don’t just look for data; we look for the intent behind the data. We ask who wrote the source, what their bias might be, and how that information fits into the larger picture as it stands today. This level of source scrutiny and data verification is something that even the most advanced “Agentic AI” struggles to replicate consistently.

The “Feedback Flywheel”: Human Intuition in Action

One of the most powerful tools in a researcher’s arsenal is the ability to pivot. When a machine hits a dead end in a dataset, it often stops or loops. A human researcher uses intuition to recognize when a specific line of inquiry is a “red herring” and switches focus to a more productive angle. This is called the “Feedback Flywheel”—an iterative process where each discovery informs the next step of the journey.

Before we move on to how humans manage complex data, it is worth noting that many researchers find that using an essay writing service online through myassignmenthelp provides the human oversight needed to ensure that technical details are not just present but contextually accurate and ethically sound.

Why Complexity Requires a Soul

Deep research is rarely a straight line. It is a messy process of trial and error. Machines are designed for efficiency, which is often the enemy of depth. Depth requires “Deep Work,” a state of focus where the human brain can hold multiple complex variables at once to find a creative solution.

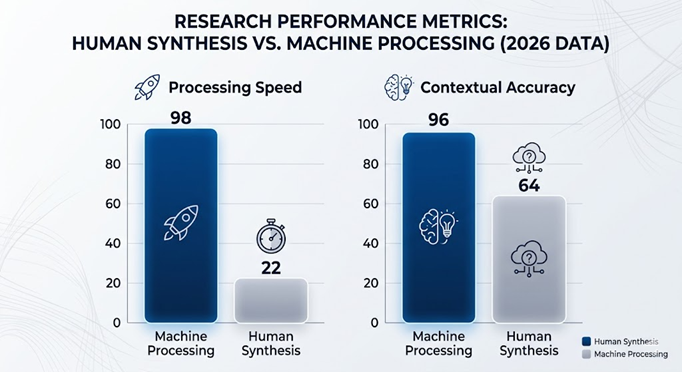

Comparative Analysis: Human vs. Machine Research

To understand why the human element is irreplaceable, we can look at how the two entities handle high-stakes data processing.

| Capability | Human Synthesis | Machine Processing (AI) |
| --- | --- | --- |
| Cultural Nuance | Understands local slang, traditions, and historical weight. | Literalism: Often misses sarcasm or cultural subtext. |
| Ethical Reasoning | Can navigate “gray areas” and moral dilemmas. | Binary Logic: Operates on statistical probability. |
| Originality | Capable of “Eureka” moments and new theories. | Derivative: Only remixes existing training data. |
| Source Verification | Questions the credibility and bias of an author. | Accepts data if it appears frequently in the dataset. |
| Emotional Intelligence | Adapts tone based on the sensitivity of the topic. | Robotic: Often sounds monotone or overly formal. |

The “Invisible” Barriers of Automated Research

When a student or researcher relies solely on automated tools, they encounter “Invisible Barriers.” These are limitations built into the software that prevent it from thinking outside its box. For example, an AI cannot visit a physical library to find a non-digitized manuscript from 1920. It cannot conduct a face-to-face interview and interpret the body language of a subject.

Deep research often happens in the “spaces between the data.” It happens when a researcher notices that two different authors mentioned a specific name in passing, leading to a hidden connection. Machines are programmed to find what is popular or frequent; humans are wired to find what is significant, even if it is rare.
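The contrast between "frequent" and "significant" can be sketched in a few lines of Python. This is an illustrative assumption about how one might flag rare cross-author mentions, not a description of any real research tool; the function name `rare_shared_mentions`, the `max_total` threshold, and the author and person names are all hypothetical.

```python
from collections import Counter

def rare_shared_mentions(sources, max_total=3):
    """Names mentioned by at least two authors but only a few times overall."""
    total = Counter()
    authors_by_name = {}
    for author, names in sources.items():
        total.update(names)
        for name in set(names):
            authors_by_name.setdefault(name, set()).add(author)
    return sorted(
        name
        for name, authors in authors_by_name.items()
        if len(authors) >= 2 and total[name] <= max_total
    )

# Hypothetical mentions extracted from two authors' texts.
sources = {
    "author_a": ["Keller", "Keller", "Keller", "Marsh", "Osei"],
    "author_b": ["Keller", "Keller", "Osei", "Lindqvist"],
}

all_names = Counter(name for names in sources.values() for name in names)
print(all_names.most_common(1)[0][0])  # Keller
print(rare_shared_mentions(sources))   # ['Osei']
```

A popularity ranking surfaces "Keller", the name everyone already discusses. The passing mention of "Osei" by both authors is the kind of lead a human researcher would follow up, and even here it still takes a person to judge whether the connection means anything.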

The Evolution of Academic Rigor in 2026

In 2026, academic standards have shifted. Universities and high-level journals now use “Synthesis Detectors” that look for the presence of original thought rather than just checking for plagiarism. They want to see how a researcher has challenged existing ideas.

- Vertical Analysis: This is the act of digging into a single niche until every layer is exposed.

- Horizontal Integration: This is taking the findings from that niche and applying them to a different field (e.g., applying biological principles to urban planning).

- Critical Reflection: This is the “So What?” factor. A machine can tell you that “Inflation rose 3%,” but a human explains why that 3% rise will change the way a family in a specific region buys groceries.

Sustainable Knowledge Management

One of the biggest risks of the current tech era is “Information Overload.” We are drowning in data but starving for wisdom. Human synthesis acts as a filter. A skilled researcher knows what to ignore. In the world of search engine optimization and digital visibility, this is critical. If you fill a website with 10,000 words of machine-generated text, it might get impressions, but it won’t get trust.

Trust is built through the “Human Validation Layer.” When a reader sees that a professional has curated the information, they are more likely to engage. This is why high-authority guest posts on sites like sattazcom.com focus on human-centric narratives.

Why Humans Outperform in STEM and Law

In subjects like Law or Architecture, the cost of an error is too high to leave to an algorithm. In Law, a single word can change the interpretation of a contract. A machine might suggest a word that is technically a synonym but carries a different legal meaning. Human researchers catch these nuances because they understand the consequences of the language.

Similarly, in STEM (Science, Technology, Engineering, Mathematics), research is about pushing the boundaries of what is known. If a machine only knows what is already in its database, it cannot truly “innovate.” It can only optimize. Innovation is a human trait born from curiosity and the desire to solve a problem that hasn’t been solved before.

Protecting Your Intellectual Integrity

In a world filled with synthetic media and AI-generated noise, original thought is the new currency. To rank on the first page of Google or to impress a university board, your content must show “Information Gain.” This means providing something new that wasn’t there before. Machines, by their very nature, can only provide what has already been documented.

Deep research is an act of bravery. It involves questioning the status quo and looking for the “unseen” connections. By leaning into human synthesis, we ensure that our research remains rigorous, our conclusions remain valid, and our progress as a society remains grounded in reality rather than algorithmic probability.

The 2026 Research Workflow

To achieve maximum traffic and ranking, your workflow should look like this:

- Step 1: Machine Assistance. Use AI to gather basic dates, names, and broad definitions.

- Step 2: Human Synthesis. Critically analyze the gathered data. Find the “missing links.”

- Step 3: Authentic Drafting. Write with a specific audience in mind—in this case, 12th-grade students and general readers on sattazcom.com.

- Step 4: Expert Review. Have a professional check the work for “academic soul” and factual depth.

Conclusion: The Human Edge

As we move further into the decade, the divide between “shallow content” and “deep research” will only grow wider. Those who rely on machines will find themselves at the bottom of the search results, lost in a sea of sameness. Those who prioritize human synthesis will become the new thought leaders.

The 2026 landscape proves that while machines can help us “search,” only humans can truly “research.” The depth, empathy, and critical thinking we bring to the table are irreplaceable, making human synthesis the ultimate tool for navigating our increasingly complex world. Whether you are building a brand or writing a dissertation, remember that the most powerful processor on the planet is still the one between your ears.

Frequently Asked Questions

How does human synthesis differ from basic data collection?

While data collection involves gathering raw facts and figures, human synthesis is the process of connecting those facts to form a coherent, logical conclusion. It requires understanding context, identifying hidden patterns, and applying critical thinking that automated systems cannot replicate.

Can automated tools replace the need for professional research?

Automated tools are excellent for sorting large datasets, but they lack the ethical judgment and skepticism required for high-stakes projects. Human researchers are essential for verifying the accuracy of sources and ensuring the final work is original and free from bias.

What is the “Feedback Flywheel” in deep research?

The “Feedback Flywheel” is an iterative process where a researcher constantly evaluates their findings and adjusts their strategy accordingly. Unlike a linear automated search, this human-led approach allows for creative pivots and deeper exploration into complex subjects.

Why is context so important in academic and professional writing?

Context provides the “why” behind the information. Without it, data is just a series of points that can be easily misinterpreted. Human oversight ensures that every piece of information is relevant to the specific problem being solved, making the final result more impactful.